Today I am giving a talk to scientists [mostly postdocs and grad students] at the Black Mountain laboratories of CSIRO [Australia's national industrial labs].

Here are the slides.

On the personal side there is something "strange" about the location of this talk. It is less than one kilometre from where I grew up and was an undergrad. Back then I never even thought about these issues.

Friday, November 29, 2013

Thursday, November 28, 2013

Another bad metal talk

Today I am giving a talk on bad metals at the 2013 Gordon Godfrey Workshop on Strong Electron Correlations and Spins in Sydney.

Here is the current version of the slides for the talk.

One question I keep getting asked is, "Are these dirty systems?" NO! They are very clean. The bad metal arises purely from electron-electron interactions.

The main results in the talk are in a recent PRL, written with Jure Kokalj.

The organic charge transfer salts and the relevant Hubbard model are discussed extensively in a review, written with Ben Powell. However, I stress that this bad metal physics is present in a wide range of strongly correlated electron materials. The organics just provide a nice tuneable system to study.

A recent review of the Finite Temperature Lanczos Method is by Peter Prelovsek and Janez Bonca.

Monday, November 25, 2013

The emergence of "sloppy" science

Here "sloppy" science is good science!

How do effective theories emerge?

What is the minimum number of variables and parameters needed to describe some emergent phenomena?

Is there a "blind"/"automated" procedure for determining what the relevant variables and parameters are?

There is an interesting paper in Science

Parameter Space Compression Underlies Emergent Theories and Predictive Models

Benjamin B. Machta, Ricky Chachra, Mark K. Transtrum, James P. Sethna

These issues are not just relevant in physics, but also in systems biology. The authors state:

important predictions largely depend only on a few “stiff” combinations of parameters, followed by a sequence of geometrically less important “sloppy” ones... This recurring characteristic, termed “sloppiness,” naturally arises in models describing collective data (not chosen to probe individual system components) and has implications similar to those of the renormalization group (RG) and continuum limit methods of statistical physics. Both physics and sloppy models show weak dependence of macroscopic observables on microscopic details and allow effective descriptions with reduced dimensionality.

The following idea is central to the paper.

The sensitivity of model predictions to changes in parameters is quantified by the Fisher Information Matrix (FIM). The FIM forms a metric on parameter space that measures the distinguishability between a model with parameters theta_m and a nearby model with parameters theta_m + delta theta_m.

The authors show that for several specific models, the eigenvalue spectrum of the FIM is dominated by just a few eigenvalues. These eigenvalues are then associated with the key parameters of the theory.
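
To make the idea concrete, here is a minimal sketch of a "sloppy" FIM spectrum. It is not taken from the paper: the toy model y(t) = exp(-theta1 t) + exp(-theta2 t), the sample times, the parameter values, and the function names are all my own assumptions. For a least-squares fit with unit Gaussian noise the FIM is J^T J, where J is the Jacobian of the predictions with respect to the parameters.

```python
import math

def fisher_information(theta1, theta2, times):
    """FIM entries (g11, g12, g22) for y(t) = exp(-theta1*t) + exp(-theta2*t),
    assuming independent Gaussian noise of unit variance on each data point."""
    g11 = g12 = g22 = 0.0
    for t in times:
        j1 = -t * math.exp(-theta1 * t)  # dy/dtheta1
        j2 = -t * math.exp(-theta2 * t)  # dy/dtheta2
        g11 += j1 * j1
        g12 += j1 * j2
        g22 += j2 * j2
    return g11, g12, g22

def eigenvalues_sym_2x2(a, b, c):
    """Eigenvalues (large, small) of the symmetric matrix [[a, b], [b, c]]."""
    mean = 0.5 * (a + c)
    half_gap = math.sqrt((0.5 * (a - c)) ** 2 + b * b)
    large = mean + half_gap
    # Use det / large for the small eigenvalue to avoid cancellation error.
    small = (a * c - b * b) / large if large > 0 else 0.0
    return large, small

# Hypothetical illustration: two similar decay rates, sampled at 11 times.
times = [0.5 * k for k in range(11)]
g11, g12, g22 = fisher_information(1.0, 1.2, times)
stiff, sloppy = eigenvalues_sym_2x2(g11, g12, g22)
print(f"stiff = {stiff:.4g}, sloppy = {sloppy:.4g}, ratio = {stiff / sloppy:.3g}")
```

Even with only two parameters, one eigenvalue is much larger than the other: the data constrain roughly the sum of the two decay rates (the stiff direction) far better than their difference (the sloppy direction).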

I have one minor quibble with the first sentence of the paper.

"Physics owes its success (1) in large part to the hierarchical character of scientific theories (2)."

Reference (1) is Eugene Wigner's famous 1960 essay "The Unreasonable Effectiveness of Mathematics in the Natural Sciences".

Reference (2) is Anderson's classic "More is different".

I think Wigner's paper is largely about something quite different from emergence, the focus of Anderson's paper. Wigner is primarily concerned with the even more profound philosophical question as to why nature can be described by mathematics at all. I see no scientific answer on the horizon.

Update. The authors also have longer papers on the same subject

Mark K. Transtrum; Benjamin B. Machta; Kevin S. Brown; Bryan C. Daniels; Christopher R. Myers; James P. Sethna

(2015)

Review: Information geometry for multiparameter models: new perspectives on the origin of simplicity

Katherine N Quinn, Michael C Abbott, Mark K Transtrum, Benjamin B Machta and James P Sethna

(2022)

Sunday, November 24, 2013

The commuting problem

I am not talking about commuting operators in quantum mechanics.

When considering a job offer, or the relative merits of multiple job offers [a luxury], rarely does one hear discussion of the daily commute associated with the job. Consider the following two options.

A. The prestigious institution is in a large city and, due to the high cost of housing, you will have to commute for more than an hour each way. Furthermore, this commute involves driving in heavy traffic or waiting for and taking crowded public transport.

B. A less prestigious institution offers you on campus [or near campus] housing so you can walk 5-15 minutes to work each day.

The difference is considerable. Option A will waste more than 10 hours of each week and increase your stress and reduce your energy. In light of that you may end up being more productive and successful at B.

It is interesting that

I realise the options often aren't that simple. Furthermore, you may not have a choice. Also, time is not the only factor. A one hour train ride to and from work each day may not be that bad if you can always get a seat and there are tables to work on. Some people really enjoy a 40 minute bicycle ride to and from work each day.

I am just saying it is an issue to consider.

I once had an attractive job offer that I turned down largely because of commuting. I am glad I did. So is my family.

Thursday, November 21, 2013

Bad metal talk at IISc Bangalore

Today I am giving a seminar in the Physics Department at the Indian Institute of Science in Bangalore.

Here is the current version of the slides.

The main results in the talk are in a recent PRL, written with Jure Kokalj.

The organic charge transfer salts and the relevant Hubbard model are discussed extensively in a review, written with Ben Powell.

Wednesday, November 20, 2013

The role of universities in nation building

There is a general view that great nations have great universities. This motivates significant public and private investment [both financial and political] in universities.

Unfortunately, these days much of the focus is on universities promoting economic growth.

However, I think equally important are the contributions that universities can make to culture, political stability, and positive social change.

Aside: Much of this discussion assumes a causality: strong universities produce strong nations. However, I think caution is in order here. Sometimes it may be correlation not causality. For example, wealthy nations use their wealth to build excellent universities.

The main purpose of this post is to make two bold claims. For neither claim do I have empirical evidence. But, I think they are worth discussing.

First some nomenclature. In every country the quality of institutions decays with ranking. In different countries that decay rate is different. Roughly the rate decreases from India to Australia to the USA. Below I will distinguish between tier 1 and tier 2 institutions. It is not clear how to define the exact boundary. But, roughly the number of tier 1 would be no more than 50, 10, and 10 for the USA, India, and Australia respectively. Let's not get in a big debate about how big this number is.

So here are the claims.

1. The key institutions for nation building are not the best institutions but the second tier ones.

2. Making second tier institutions effective is much more challenging than first tier institutions.

Let me try and justify each claim.

1. Great nations are not just built by brilliant scientists, writers and entrepreneurs.

Rather they also require effective school teachers, engineers, small business owners,...

Furthermore, you need citizens who are well informed, critical thinkers and engaged in politics and communities. The best universities are populated with highly gifted and motivated faculty and students. Most would be productive and successful, regardless of fancy buildings or high salaries. The best students will learn a lot regardless of the quality of the teaching. You don't need to teach them how to write an essay or to think critically. In contrast, faculty and students at second tier institutions require significantly more nurturing and development.

2. At tier one institutions governments [or private trustees] just need to provide a certain minimal amount of resources and get out of the way. However, tier two institutions are a completely different ball game. Many are characterised by form without substance.

To be concrete, you can write impressive course profiles, assign leading texts, give lectures, and have fancy graduation ceremonies, but at the end students actually learn little. This painful reality is covered up by soft exams and grading. The focus is on rote learning rather than critical thinking.

Faculty may do research in the sense that they get grants, graduate Ph.D students, and publish papers.

However, the "research" and the Ph.D graduates are of such low quality they make little contribution to the nation.

The problem is accentuated by the fact that many of these institutions don't want to face the painful reality of the low quality of their incoming students and so they don't adjust their mission and programs accordingly. They just try to mimic tier one institutions.

Reforming these institutions is particularly difficult because they are largely controlled by career administrators who have no real experience or interest in real scholarship or teaching. Instead, they are obsessed with rankings, metrics, reorganisations, buildings, money, and particularly their own careers.

I think these concerns are just as applicable to countries as diverse as the USA, Australia, and India.

For a perspective on the latter, there is an interesting paper by Sabyasachi Bhattacharya

Indian Science Today: An Indigenously Crafted Crisis

He was a recent director of the Tata Institute for Fundamental Research.

I also found helpful a Physics World essay by Shiraz Minwalla.

I welcome comments.

Monday, November 18, 2013

The challenge of intermediate coupling

The point here is a basic one. But, it is important to keep in mind.

One might tend to think that in quantum many-body theory the hardest problems are strong coupling ones. Let g denote some dimensionless coupling constant where g=0 corresponds to non-interacting particles. Obviously for large g perturbation theory is most unreliable and progress will be difficult. However, in some problems one can treat 1/g as a perturbative parameter and make progress. But this does require the infinite coupling limit be tractable.

Here are a few examples where strong coupling is actually tractable [but certainly non-trivial]

- The Hubbard model at half filling. For U much larger than t, the ground state is a Mott insulator. There is a charge gap and the low-lying excitations are spin excitations that are described by an antiferromagnetic Heisenberg model. Except for the case of frustration, i.e. on a non-bipartite lattice, the system is well understood.

- BEC-BCS crossover in ultracold fermionic atoms, near the unitarity limit.

- The Kondo problem at low temperatures. The system is a Fermi liquid, corresponding to the strong-coupling fixed point of the Kondo model.

- The fractional quantum Hall effect.

In contrast, the following problems appear to involve intermediate coupling.

- Cuprate superconductors. For a long time it was considered that they are in the large U/t limit [i.e. strongly correlated] and that the Mottness was essential. However, Andy Millis and collaborators argue otherwise, as described here. It is interesting that one gets d-wave superconductivity both from a weak-coupling RG approach and a strong coupling RVB theory.

- Quantum chemistry. Weak coupling corresponds to molecular orbital theory. Strong coupling corresponds to valence bond theory. Real molecules are somewhere in the middle. This is the origin of the great debate about the relative merits of these approaches.

- Superconducting organic charge transfer salts. Many can be described by a Hubbard model on the anisotropic triangular lattice at half filling. Superconductivity occurs in proximity to the Mott transition which occurs for U ~ 8t. Ring exchange terms in the Heisenberg model may be important for understanding spin liquid phases.

- Graphene. It has U ~ bandwidth and long range Coulomb interactions. Perturb it and you could end up with an insulator.

- Exciton transport in photosynthetic systems. The kinetic energy, thermal energy, solvent reorganisation energy, and relaxation frequency [cut-off frequency of the bath] are all comparable.

- Water. This is my intuition but I find it hard to justify. It is not clear to me what the "coupling constants" are.
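
The strong coupling limit of the Hubbard model mentioned above can be illustrated with the simplest possible case: two sites and two electrons. This is my own minimal sketch, not something from the papers cited, and the function name is hypothetical. In the singlet sector the Hamiltonian reduces to the 2x2 matrix [[0, -2t], [-2t, U]], while the triplet has energy zero, so the singlet-triplet splitting can be compared with the perturbative Heisenberg exchange J = 4t^2/U.

```python
import math

def two_site_exchange(t, U):
    """Exact singlet-triplet splitting of the two-site Hubbard model
    at half filling (two electrons on two sites)."""
    # Lowest eigenvalue of the singlet block [[0, -2t], [-2t, U]].
    e_singlet = 0.5 * (U - math.sqrt(U * U + 16.0 * t * t))
    e_triplet = 0.0  # The triplet cannot hop because of Pauli exclusion.
    return e_triplet - e_singlet

t = 1.0
for U in (1.0, 4.0, 8.0, 16.0, 64.0):
    exact = two_site_exchange(t, U)
    perturbative = 4.0 * t * t / U  # Leading order in t/U.
    print(f"U/t = {U:5.1f}  exact J = {exact:.4f}  4t^2/U = {perturbative:.4f}")
```

The two values agree well only for U much larger than t. For U comparable to the bandwidth, neither the weak-coupling nor the strong-coupling expansion is reliable, which is the point of this post.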

Intermediate coupling is both a blessing and a curse. It is a blessing because there is lots of interesting physics and chemistry associated with it. It is a curse because it is so hard to make reliable progress.

I welcome suggestions of other examples.

Saturday, November 16, 2013

The silly marketing of an Australian university

Recently, I posted about a laundry detergent I bought in India that features "Vibrating molecules" (TM) and wryly commented that the marketing of some universities is not much better. I saw that this week, again in India. I read that a former Australian cricket captain, Adam Gilchrist, [an even bigger celebrity in India than in Australia], was in Bangalore as a "Brand name Ambassador" for a particular Australian university. The university annually offers one Bradman scholarship to an Indian student for which it pays 50 per cent of the tuition for an undergraduate degree. [Unfortunately, the amount of money spent on the business class airfares associated with the launch of this scholarship probably exceeded the annual value of the scholarship].

Some measure of Gilchrist's integrity is that at the same event sponsored by the university he said he supported the introduction of legalised betting on sports in India. Many in Australia think such betting has been a disaster, leading to government intervention last year.

What is my problem with this? Gilchrist and Bradman may be cricketing legends. However, neither ever went to university or has had any engagement or interest in universities.

Thursday, November 14, 2013

Possible functional role of strong hydrogen bonds in proteins

There is a nice review article Low-barrier hydrogen bonds in proteins

by M.V. Hosur, R. Chitra, Samarth Hegde, R.R. Choudhury, Amit Das, and R.V. Hosur

Most hydrogen bonds in proteins are weak, as characterised by a donor-acceptor distance larger than 2.8 Angstroms, and interaction energies of a few kcal/mol (~0.1 eV ~ 3 k_B T). However, there are some bonds that are much shorter. In particular, Cleland proposed in 1993 that in some enzymes there are H-bonds that are sufficiently short (R ~ 2.4-2.5 A) that the energy barrier for proton transfer from the donor to the acceptor is comparable to the zero-point energy of the donor-H stretch vibration. These are called low-barrier hydrogen bonds (LBHBs). This proposal remains controversial. For example, Ariel Warshel says they have no functional role.
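
The energy scales quoted above are easy to check. Here is a small sketch of the unit conversions; the function names are my own, and I assume room temperature, T = 300 K.

```python
K_B_EV_PER_K = 8.617333e-5     # Boltzmann constant in eV per kelvin
KCAL_PER_MOL_PER_EV = 23.0605  # 1 eV per particle expressed in kcal/mol

def kcal_per_mol_to_ev(energy_kcal_per_mol):
    """Convert an energy from kcal/mol to eV per particle."""
    return energy_kcal_per_mol / KCAL_PER_MOL_PER_EV

def ev_to_kT(energy_ev, temperature_kelvin=300.0):
    """Express an energy in units of k_B T at the given temperature."""
    return energy_ev / (K_B_EV_PER_K * temperature_kelvin)

for e_kcal in (1.0, 2.0, 3.0):
    e_ev = kcal_per_mol_to_ev(e_kcal)
    print(f"{e_kcal:.1f} kcal/mol = {e_ev:.3f} eV = {ev_to_kT(e_ev):.1f} k_B T")
```

So "a few kcal/mol" is indeed of order 0.1 eV, or a few times k_B T at room temperature.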

The authors perform extensive analysis of crystal structure databases, for both proteins and small molecules, in order to identify the relative abundance of short bonds, and their location relative to the active sites of proteins. Here are a few things I found interesting.

1. For a strong bond, the zero-point motion along the bond direction will be much larger than in the perpendicular directions. This means that there should be significant anisotropy in the ellipsoid associated with the uncertainty of the hydrogen atom position determined from neutron scattering [ADP = Atomic Displacement Parameter = Debye-Waller factor]. The ellipsoid is generally spherical for normal [i.e., common and weak] H-bonds. They find that anisotropy is correlated with the presence of short bonds and with "matching pK_a's" [i.e., the donor and acceptor have similar chemical identity and proton affinity], as one would expect.

2. For 36 different protein structures they find very few LBHBs. Furthermore, in many the H-bonds identified are away from the active site. But, this may be of significance, as discussed below.

3. An LBHB may play a role in excited state proton transfer in green fluorescent protein, as described here.

4. In HIV-1 protease there is a very short H-bond with no barrier.

5. There is a correlation between the location of short H-bonds and the "folding cores" of specific proteins, including HIV-1. These sites are identified through NMR, which allows one to study partially denatured [i.e. unfolded] protein conformations. This suggests short H-bonds may play a functional role in protein folding.

Monday, November 11, 2013

Tata Colloquium on organic Mott insulators

Tomorrow I am giving the theory colloquium at the Tata Institute for Fundamental Research in Mumbai. My host is Kedar Damle.

Here are the slides for "Frustrated Mott insulators: from quantum spin liquids to superconductors".

A related review article was co-authored with Ben Powell.

Saturday, November 9, 2013

Towards effective scientific publishing and career evaluation by 2030?

Previously I have argued that Science is broken and raised the question, Have journals become redundant and counterproductive? Reading these earlier posts is recommended to better understand this post.

Some of the problems that need to be addressed are:

- journals are wasting a lot of time and money

- rubbish and mediocre work are getting published, sometimes in "high impact" journals

- honorary authorship leads to long author lists and misplaced credit

- increasing emphasis on "sexy" speculative results

- metrics are taking priority over rigorous evaluation

- negative results or confirmatory studies don't get published

- lack of transparency of the refereeing and editorial process

- .....

It is always much easier to identify problems than to provide constructive and realistic solutions.

Here is my proposal for a possible way forward. I hope others will suggest even better approaches.

1. Abolish journals. They are an artefact of the pre-internet world and are now doing more harm than good.

2. Scientists will post papers on the arXiv.

3. Every scientist receives only 2 paper tokens per year. This entitles them to post two single author [or four dual author, etc.] papers per year on the arXiv. Unused credits can be carried over to later years. There will be no limit to the paper length. Overall, this should increase the quality of papers and will remove the problem of "honorary" authors.

4. To keep receiving 2 tokens per year, each scientist must write a commentary on 2 other papers. Multiple author commentaries are allowed. These commentaries can include new results checking the results of the commented on paper. Authors of the original can write responses.

5. When people apply for jobs, grants, and promotion they will submit their publication list and their commentary list. They will be evaluated on the quality of both. Those evaluating the candidate will find the quantity and quality of commentaries on their papers very useful.
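
To see how the token bookkeeping in points 3 and 4 might work, here is a rough sketch. It is only one possible reading of the proposal. I assume that a paper costs one token in total, split equally among its authors (so that two tokens cover two single author or four dual author papers), that each author of a joint commentary gets credit for one commentary, and that a new scientist starts with one year's allocation. The class and method names are hypothetical.

```python
from fractions import Fraction

class TokenLedger:
    """Toy bookkeeping for paper tokens and commentary requirements."""

    def __init__(self, tokens_per_year=2, commentaries_required=2):
        self.tokens_per_year = Fraction(tokens_per_year)
        self.commentaries_required = commentaries_required
        self.balance = {}       # scientist -> tokens available
        self.commentaries = {}  # scientist -> commentaries written this year

    def register(self, name):
        # Assumption: a new scientist starts with one year's allocation.
        self.balance[name] = self.tokens_per_year
        self.commentaries[name] = 0

    def post_paper(self, authors):
        """Charge each author 1/len(authors) tokens; refuse if anyone is short."""
        share = Fraction(1, len(authors))
        if any(self.balance[a] < share for a in authors):
            return False
        for a in authors:
            self.balance[a] -= share
        return True

    def write_commentary(self, authors):
        # Assumption: every author of a joint commentary gets full credit.
        for a in authors:
            self.commentaries[a] += 1

    def new_year(self):
        """Grant new tokens only to those who wrote enough commentaries.
        Unused tokens carry over."""
        for name in self.balance:
            if self.commentaries[name] >= self.commentaries_required:
                self.balance[name] += self.tokens_per_year
            self.commentaries[name] = 0

ledger = TokenLedger()
ledger.register("alice")
ledger.register("bob")
posted = [ledger.post_paper(["alice", "bob"]) for _ in range(5)]
print(posted)  # [True, True, True, True, False]
```

In this toy version the fifth joint paper is refused because both authors have spent their two tokens. Only those who then write two commentaries receive tokens the following year.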

The above draft proposal is far from perfect and I can already think of silly things that people will do to publish more... However, for all its faults I sincerely believe that this system would be vastly better than the current one.

The first step is to get the arXiv to allow commentaries to be added. But, this will only become really effective when there is a career incentive for people to write careful critical and detailed commentaries.

So, fire away! I welcome comments and alternative suggestions.

Thursday, November 7, 2013

Emergence of dynamical particle-hole asymmetry II

This follows an earlier post, "Emergence of dynamical particle-hole asymmetry", concerning the new approach of Sriram Shastry to doped Mott insulators, which describes them as an extremely correlated Fermi liquid characterised by two self energies.

There is a nice preprint

Extremely correlated Fermi liquid theory meets Dynamical mean-field theory: Analytical insights into the doping-driven Mott transition

Rok Zitko, D. Hansen, Edward Perepelitsky, Jerne Mravlje, Antoine Georges, Sriram Shastry

The particle-hole asymmetry means that electron-like quasi-particles have much longer lifetimes than hole-like quasi-particles. This means that the imaginary part of the (single-particle Dyson) self energy Sigma(omega) is asymmetric about omega=0, the chemical potential.

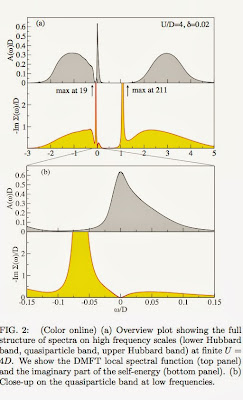

Here are two particularly noteworthy figures from the paper.

The first shows how the asymmetry at omega=0 is associated with the quasi-particle band (QPB) at the top of the lower Hubbard band (LHB). This band emerges as the Mott insulator is doped. The suppression of the density of states A(omega) between the QPB and LHB means that Im Sigma(omega) must be very large in that energy/frequency range (omega ~ -0.01). A similar requirement does not hold for positive omega, leading to the large electron-hole asymmetry.

The figure below shows a fit of the frequency dependence of the imaginary part of the self energy, calculated using Dynamical Mean-Field Theory (DMFT), to a form given by the Extremely Correlated Fermi Liquid (ECFL) theory.

Note the large particle-hole asymmetry and how it increases with decreasing doping. A regular Fermi liquid would simply have an inverted parabola with a maximum at omega=0.
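To make the contrast with a regular Fermi liquid concrete, here is a toy sketch at zero temperature. The asymmetric form, a parabola multiplied by (1 - omega/delta), and the parameters `omega0` and `delta` are illustrative assumptions of mine. This is not the ECFL expression fitted in the preprint.

```python
def im_sigma_fermi_liquid(omega: float, omega0: float = 1.0) -> float:
    """Zero-temperature Fermi-liquid form: an inverted parabola with its maximum at omega = 0."""
    return -omega**2 / omega0


def im_sigma_asymmetric(omega: float, omega0: float = 1.0, delta: float = 0.5) -> float:
    """Toy asymmetric form: the parabola multiplied by (1 - omega/delta).

    Only meant for |omega| < delta; a smaller `delta` gives a larger asymmetry.
    """
    return -(omega**2 / omega0) * (1.0 - omega / delta)


for w in (0.05, 0.1, 0.2):
    hole = abs(im_sigma_asymmetric(-w))      # scattering rate of a hole-like excitation
    electron = abs(im_sigma_asymmetric(+w))  # scattering rate of an electron-like excitation
    print(f"omega = {w}: hole/electron scattering ratio = {hole / electron:.2f}")
```

In this toy picture the ratio is (1 + omega/delta)/(1 - omega/delta), so hole-like excitations always scatter more strongly than electron-like ones, while the Fermi-liquid parabola gives a ratio of exactly one.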

I thank Rok, Jerne, and Sriram for helpful discussions about their work.

A company that needs to clean up its act

This week I bought this laundry detergent in India.

Note how at the bottom it features the TradeMark, "Vibrating molecules", giving it Power!

It is interesting/amusing to read the Wikipedia page for Surf Excel. It is full of similar marketing nonsense.

Scientists might mock this, but some university marketing campaigns are comparable.

Tuesday, November 5, 2013

Bad taste and the sins of academia

There is a very thoughtful article in Angewandte Chemie

The Seven Sins in Academic Behavior in the Natural Sciences

by Wilfred F. van Gunsteren

It is worth reading slowly and in full. He highlights the negative influence of "high impact" journals and discusses many of the same issues as the recent cover story in the Economist. He has some nice examples of each of the seven sins.

But, there was one paragraph that really stood out.

Administrative officials at universities and other academic institutions should refrain from issuing detailed regulations that may stifle the creativity and adventurism on which research depends. They should rather foster discussion about basic principles and appropriate behavior, and judge their staff and applicants for jobs based on their curiosity-driven urge to do research, understand, and share their knowledge rather than on superficial aspects of academic research such as counting papers or citations or considering a person’s grant income or h-index or whatever ranking, which generally only reflect quantity and barely quality. If the curriculum vitae of an applicant lists the number of citations or an h-index value or the amount of grant money gathered, one should regard this as a sign of superficiality and misunderstanding of the academic research endeavor, a basic flaw in academic attitude, or at best as a sign of bad taste.

Wow! This is so unlike the standard (and unquestioned) mode of operation in Australia and many other countries.

I guess ETH-Zurich [where van Gunsteren just retired from] operates in a different manner. Previously, I posted about the criteria that Stanford uses for tenure. So universities that want to be "world class" might want to "follow best practice".

Friday, November 1, 2013

Quantum of thermal conductance

Here are a couple of things I find surprising about the electronic transport properties of materials.

1. One cannot simply have materials, particularly metals, that have any value imaginable for a transport coefficient. For example, one cannot make the conductance or the thermopower as large as one wishes by designing some fantastic material.

2. Quantum mechanics determines what these fundamental limits are. Furthermore, the limiting values of transport coefficients are often set in terms of fundamental constants [Planck's constant, Boltzmann's constant, charge on an electron].

That this is profound is indicated by the fact that it was not appreciated until about 25 years ago. A nice clean example is the case of a quantum point contact with N channels. The conductance must be N times the quantum of conductance, 2e^2/h. This result was proposed by Rolf Landauer in 1957 but many people did not believe it until the first experimental confirmation in 1988.

The thermal conductance through a point contact should also be quantised. The quantum of thermal conductance is pi^2 k_B^2 T/(3h) for each spin-resolved channel, where T is the temperature.

Asides:

1. note that the Wiedemann-Franz ratio is satisfied.

2. this sets the scale for the thermal conductivity of a bad metal.
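The numbers behind these statements can be checked with a short sketch. It uses the standard expressions e^2/h and pi^2 k_B^2 T/(3h) for the electrical and thermal conductance of a single spin-resolved channel, so the 2e^2/h quoted above corresponds to two such channels. The function names are my own and the constants are the exact SI values.

```python
import math

# Exact SI values (2019 redefinition of the SI)
H = 6.62607015e-34    # Planck constant, J s
E = 1.602176634e-19   # elementary charge, C
K_B = 1.380649e-23    # Boltzmann constant, J/K


def electrical_conductance(n_spin_channels: int) -> float:
    """Conductance of n spin-resolved channels, n * e^2/h, in siemens."""
    return n_spin_channels * E**2 / H


def thermal_conductance(n_spin_channels: int, temperature: float) -> float:
    """Thermal conductance of n spin-resolved channels, n * pi^2 k_B^2 T / (3h), in W/K."""
    return n_spin_channels * math.pi**2 * K_B**2 * temperature / (3 * H)


def lorenz_number() -> float:
    """Sommerfeld value of the Lorenz number, pi^2 k_B^2 / (3 e^2), in W Ohm / K^2."""
    return math.pi**2 * K_B**2 / (3 * E**2)


T = 1.0  # temperature in kelvin (arbitrary choice for illustration)
G = electrical_conductance(2)       # the spin-degenerate quantum 2e^2/h
kappa = thermal_conductance(2, T)
print(f"2e^2/h = {G:.4e} S")
print(f"thermal conductance at {T} K = {kappa:.4e} W/K")
print(f"kappa/(G*T) = {kappa / (G * T):.4e}, Lorenz number = {lorenz_number():.4e}")
```

The ratio kappa/(G T) is independent of both the number of channels and the temperature, which is the sense in which the Wiedemann-Franz ratio is satisfied.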

A paper in Science this week reports the experimental observation of this quantisation.
