Thanks to the ingenuity of synthetic chemists, metal-organic frameworks (MOFs) represent a fascinating class of materials with many potential technological applications.

Previously, I have posted about spin-crossover, self-diffusion of small hydrocarbons, and the lack of reproducibility of CO2 absorption measurements in these materials.

At the last condensed matter theory group meeting we had an open discussion about this JACS paper.

Metallic Conductivity in a Two-Dimensional Cobalt Dithiolene Metal−Organic Framework

Andrew J. Clough, Jonathan M. Skelton, Courtney A. Downes, Ashley A. de la Rosa, Joseph W. Yoo, Aron Walsh, Brent C. Melot, and Smaranda C. Marinescu

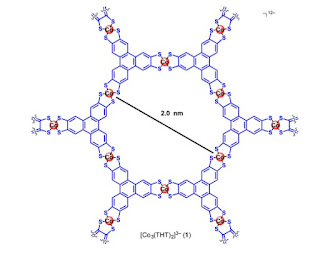

The basic molecular unit is shown below. These molecules stack on top of one another, producing a layered crystal structure. DFT calculations suggest that the largest molecular overlap (and conductivity) is in the stacking direction.

Within the layers the MOF has the structure of a honeycomb lattice.

The authors measured the resistivity of several different samples as a function of temperature. The results are shown below. The distances correspond to the size of the compressed powder pellets.

Based on the observation that the resistivity is a non-monotonic function of temperature, they suggest that as the temperature decreases there is a transition from an insulator to a metal. Since there is no hysteresis, they rule out a first-order phase transition, such as that observed in vanadium dioxide, VO2.

They claim that the material is an insulator above about 150 K, based on fitting the resistivity versus temperature to an activated form, deducing an energy gap of about 100 meV. However, one should note the following.

1. It is very difficult to accurately measure the resistivity of materials, particularly anisotropic ones. Some people spend their whole career focussing on doing this well.

2. Measurements on powder pellets will contain a mixture of the effects of the crystal anisotropy, random grain directions, intergrain conductivity, and contact resistances. This is reflected in how sample-dependent the results above are.

3. The measured resistivity is orders of magnitude larger than the Mott-Ioffe-Regel limit, suggesting that the samples are very "dirty", or that one is not measuring the intrinsic conductivity, or that this is a very bad metal due to electron correlations.

4. It is debatable whether one can deduce activated behaviour from only an order of magnitude variation in resistance, due to the narrow temperature range considered.
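To make point 4 concrete, here is a minimal sketch of what an activated fit involves. It is not the authors' analysis. I assume the form rho(T) = rho_0 exp(E_a / k_B T) and fit a straight line to ln(rho) versus 1/T. The data are synthetic, and the function name, the prefactor, and the choice E_a = 100 meV are purely illustrative. Note that some people define the gap as 2 E_a rather than E_a, and I do not know which convention the paper uses.

```python
import math

K_B_EV = 8.617333e-5  # Boltzmann constant in eV/K


def fit_activation_energy(temps_k, resistivities):
    """Least-squares line through ln(rho) versus 1/T.

    Assumes rho(T) = rho_0 * exp(E_a / (k_B * T)).
    Returns (E_a in eV, rho_0 in the units of the input resistivities).
    """
    xs = [1.0 / t for t in temps_k]
    ys = [math.log(r) for r in resistivities]
    n = len(xs)
    x_mean = sum(xs) / n
    y_mean = sum(ys) / n
    sxx = sum((x - x_mean) ** 2 for x in xs)
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    slope = sxy / sxx                  # slope of the Arrhenius plot, E_a / k_B
    intercept = y_mean - slope * x_mean  # ln(rho_0)
    return slope * K_B_EV, math.exp(intercept)


# Synthetic, noise-free data between 150 K and 300 K (hypothetical values).
temps = [150.0 + 10.0 * i for i in range(16)]
rho_synthetic = [2.0 * math.exp(0.1 / (K_B_EV * t)) for t in temps]

e_a, rho_0 = fit_activation_energy(temps, rho_synthetic)
dynamic_range = max(rho_synthetic) / min(rho_synthetic)

print(f"E_a = {1000 * e_a:.1f} meV, rho_0 = {rho_0:.3f}")
print(f"rho(150 K) / rho(300 K) = {dynamic_range:.1f}")
```

Even for ideal activated behaviour with these assumed parameters, the resistivity changes by less than two decades between 150 K and 300 K. With real noise and grain-boundary contributions, a power law or a variable-range-hopping form may fit data over such a window about as well.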

The temperature dependence of the magnetic susceptibility is shown below. It is taken from the Supplementary Material.

The authors fit this to a sum of several terms, including a constant term and a Curie-Weiss term. The latter gives a magnetic moment associated with S=1/2, as expected for the cobalt ions, and an antiferromagnetic exchange interaction J ~ 100 K. This is what you expect if the system is a Mott insulator or a very bad metal, close to a Mott transition.
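For readers who want to check the S=1/2 claim themselves, here is a rough sketch of the two terms named above: a constant plus a Curie-Weiss term, chi(T) = chi_0 + C / (T - theta). The remaining terms in the authors' fit are not reproduced because I have not listed them here. The function names are my own, I assume g = 2 and CGS molar units (emu K/mol), and the Weiss temperature of -100 K is only a placeholder, since the relation between theta and J depends on the convention and the number of neighbours.

```python
import math

N_A = 6.02214076e23      # Avogadro constant, 1/mol
MU_B = 9.2740100783e-21  # Bohr magneton, erg/G
K_B = 1.380649e-16       # Boltzmann constant, erg/K


def curie_constant(spin, g=2.0):
    """Molar Curie constant C = N_A g^2 mu_B^2 S(S+1) / (3 k_B), in emu K/mol."""
    return N_A * (g * MU_B) ** 2 * spin * (spin + 1) / (3.0 * K_B)


def effective_moment(curie_c):
    """Effective moment per ion, in Bohr magnetons, from a molar Curie constant."""
    return math.sqrt(3.0 * K_B * curie_c / (N_A * MU_B ** 2))


def curie_weiss(temp_k, chi_0, curie_c, theta_k):
    """Constant term plus Curie-Weiss term; theta_k < 0 is antiferromagnetic here."""
    return chi_0 + curie_c / (temp_k - theta_k)


c_half = curie_constant(0.5)       # expected Curie constant for S = 1/2, g = 2
mu_eff = effective_moment(c_half)  # equals g * sqrt(S(S+1)) for this case

# Placeholder parameters: no constant term, theta = -100 K (hypothetical).
chi_300 = curie_weiss(300.0, 0.0, c_half, -100.0)

print(f"C(S=1/2) = {c_half:.3f} emu K/mol, mu_eff = {mu_eff:.3f} mu_B")
```

A fitted Curie constant well below the S=1/2 value per cobalt would suggest that only a fraction of the sites carry a local moment, which is one way to make the impurity question quantitative.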

Again, there are a few questions one should be concerned about.

1. How does this relate to the claim of a metal at low temperatures?

2. The problem of curve fitting. Can one really separate out the different contributions?

3. Are the low moments due to magnetic impurities?

The published DFT-based calculations suggest the material should be a metal because the bands are partially full. Electron correlations could change that. The band structure is quasi-one-dimensional with the most conducting direction perpendicular to the plane of the molecules.

All these questions highlight to me the problem of multi-disciplinary papers. Should you believe physical measurements published by chemists? Should you believe chemical compositions claimed by physicists? Should you believe theoretical calculations performed by experimentalists? We need each other and due diligence, caution, and cross-checking.

Having these discussions in group meetings is important, particularly for students to see that they should not automatically believe what they read in "high impact" journals.

An important next step is to come up with a well-justified effective lattice Hamiltonian.