In 2016, when I saw the first results from the LIGO gravitational wave interferometer, my natural caution and skepticism kicked in. They had observed just one signal in an incredibly sensitive measurement. A lot of data analysis was required to extract the signal from the background noise. That signal was then fitted to the results of numerical simulations of the solutions to Einstein's gravitational field equations describing the merger of two black holes. Depending on how you count, about 15 parameters are required to specify the binary system (distance from Earth, masses, relative orientations of orbits, ...). The detection events involve displacements of the mirrors in the interferometer of order 10^-18 metres, far smaller than the diameter of a proton!

What on earth could go wrong?!

After all, this was only two years after the BICEP2 fiasco, in which the collaboration claimed to have detected anisotropies in the cosmic microwave background due to gravitational waves associated with cosmic inflation. The observed signal turned out to be just cosmic dust! It led to a book by the cosmologist Brian Keating, Losing the Nobel Prize: A Story of Cosmology, Ambition, and the Perils of Science’s Highest Honor.

Well, I am happy to be wrong if it is good for science. Almost one hundred gravitational wave events have now been observed, and one event, GW170817, has been correlated with an X-ray observation.

But detecting some gravitational waves is quite a long way from gravitational wave astronomy, i.e., using gravitational wave detectors as a telescope in the same sense as the regular suite of optical, radio, X-ray, ... detectors. I was also skeptical about that. But it does now seem that gravitational wave detectors are providing a new window into the universe.

A few weeks ago I heard a very nice UQ colloquium by Paul Lasky, "What's next in gravitational wave astronomy?"

Paul gave a nice overview of the state of the field, both past and future.

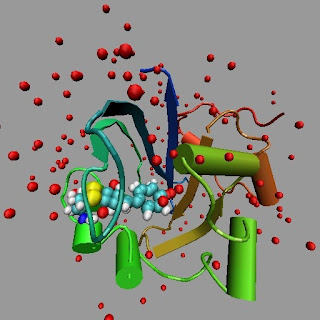

A key summary figure is below. It shows different possible futures when two neutron stars merge.

The figure is taken from the helpful review

The evolution of binary neutron star post-merger remnants: a review, Nikhil Sarin and Paul D. Lasky

A few things stood out to me.

1. One stunning piece of physics is that in the first black hole merger observed (GW150914), the mass of the final black hole was about three solar masses less than the total mass of the two original black holes. This mass-energy (E=mc^2) of three solar masses was converted into gravitational wave energy within a fraction of a second. The peak radiated power was more than fifty times the power output of all the stars in the observable universe combined!

I have fundamental questions about a clear physical description of this energy conversion process. First, defining "energy" in general relativity is a vexed and unresolved question with a long history. Second, is there any sense in which one needs to describe this in terms of a quantum field theory: specifically, the conversion of neutron matter into gravitons?
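To get a feel for the scale of three solar masses of mass-energy, here is a back-of-the-envelope sketch in Python. The constants are rounded standard values, and the comparison with the Sun's luminosity is my own illustration rather than something from the talk. The function names are just for this example.

```python
# Rough scale of three solar masses converted to energy via E = m c^2.
# Constants are rounded standard values (SI units).
C = 2.998e8                 # speed of light, m/s
M_SUN = 1.989e30            # solar mass, kg
L_SUN = 3.828e26            # nominal solar luminosity, W
SECONDS_PER_YEAR = 3.156e7


def radiated_energy_joules(mass_loss_in_solar_masses: float) -> float:
    """Energy equivalent of a mass deficit given in solar masses."""
    return mass_loss_in_solar_masses * M_SUN * C**2


def years_for_sun_to_emit(energy_joules: float) -> float:
    """Time the Sun would need to radiate this energy at its present luminosity."""
    return energy_joules / L_SUN / SECONDS_PER_YEAR


energy = radiated_energy_joules(3.0)
print(f"E = {energy:.1e} J")
print(f"Sun would need {years_for_sun_to_emit(energy):.1e} years")
```

The energy comes out at roughly 5 x 10^47 joules. At its present luminosity the Sun would need tens of trillions of years to radiate that much, far longer than the age of the universe.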

2. Probing nuclear astrophysics in neutron stars. It may be possible to test the equation of state (the relation between pressure and density) of nuclear matter. This determines the Tolman–Oppenheimer–Volkoff limit: the upper bound to the mass of cold, non-rotating neutron stars. According to Sarin and Lasky:

> The supramassive neutron star observations again provide a tantalising way of developing our understanding of the dynamics of the nascent neutron star and the equation of state of nuclear matter (e.g., [37,121,127–131]). The procedure is straight forward: if we understand the progenitor mass distribution (which we do not), as well as the dominant spin down mechanism (we do not understand that either), and the spin-down rate/braking index (not really), then we can rearrange the set of equations governing the system’s evolution to find that the time of collapse is a function of the unknown maximum neutron star mass, which we can therefore infer. This procedure has been performed a number of times in different works, each arriving at different answers depending on the underlying assumptions at each of the step. The vanilla assumptions of dipole vacuum spin down of hadronic stars does not well fit the data [37,127], leading some authors to infer that quark stars, rather than hadronic stars, best explain the data (e.g., [129,130]), while others infer that gravitational radiation dominates the star’s angular momentum loss rather than magnetic dipole radiation (e.g [121,127]).

As the authors say, this is a "tantalising" prospect, but there are many unknowns. I appreciate their honesty.
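To make the logic of that procedure concrete, here is a minimal sketch in Python of the "vanilla" case only. It assumes vacuum magnetic-dipole spin-down of an orthogonal rotator, and it assumes that the maximum mass supported at spin period p can be written as M_max(p) = M_TOV (1 + alpha p^beta) with beta < 0. That parameterisation, the function names, and every number in `EXAMPLE` are assumptions of mine for illustration; none of them is taken from the review or from data.

```python
import math

C_CGS = 2.998e10  # speed of light, cm/s


def dipole_constant(b_gauss, radius_cm, inertia_cgs):
    """k in p(t)^2 = p0^2 + k t for vacuum dipole spin-down (CGS units)."""
    return 4.0 * math.pi**2 * b_gauss**2 * radius_cm**6 / (3.0 * C_CGS**3 * inertia_cgs)


def spin_period(t_s, p0_s, b_gauss, radius_cm, inertia_cgs):
    """Spin period (s) after time t_s, starting from period p0_s."""
    return math.sqrt(p0_s**2 + dipole_constant(b_gauss, radius_cm, inertia_cgs) * t_s)


def max_mass(period_s, m_tov, alpha, beta):
    """Assumed form M_max(p) = M_TOV * (1 + alpha * p**beta); masses in solar masses."""
    return m_tov * (1.0 + alpha * period_s**beta)


def collapse_time(m_star, m_tov, alpha, beta, p0_s, b_gauss, radius_cm, inertia_cgs):
    """Time (s) until rotation can no longer support a star of mass m_star."""
    if m_star <= m_tov:
        return math.inf  # below the non-rotating limit: never collapses
    # Period at which M_max(p) has dropped to m_star.
    p_col = ((m_star - m_tov) / (alpha * m_tov)) ** (1.0 / beta)
    if p_col <= p0_s:
        return 0.0  # already too slow at birth: prompt collapse
    return (p_col**2 - p0_s**2) / dipole_constant(b_gauss, radius_cm, inertia_cgs)


# Hypothetical parameters, chosen only to give a finite collapse time.
EXAMPLE = dict(
    m_star=2.25,        # remnant mass, solar masses
    m_tov=2.20,         # assumed non-rotating maximum mass, solar masses
    alpha=1.6e-10,      # assumed fit coefficient, units s^(-beta)
    beta=-2.8,          # assumed fit exponent
    p0_s=1.0e-3,        # birth spin period, s
    b_gauss=1.0e15,     # polar magnetic field, G
    radius_cm=1.2e6,    # stellar radius, cm
    inertia_cgs=3.0e45, # moment of inertia, g cm^2
)

t_col = collapse_time(**EXAMPLE)
print(f"collapse after about {t_col:.0f} s")
```

Turning this around is the inference the authors describe: an observed collapse time constrains M_TOV only once the remnant mass, magnetic field, and spin-down law are fixed. In this toy model, nudging any of those inputs shifts the inferred maximum mass, which is exactly the caveat in the quote.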

3. Probing the phase diagram of Quantum Chromodynamics (QCD)

This is one of my favourite phase diagrams and I used to love to show it to undergraduates.

Neutron stars are close to the first-order phase transition associated with quark deconfinement.

When two neutron stars merge, the phase boundary may be crossed.