Einstein’s theory of General Relativity successfully describes gravity at large scales of length and mass. In contrast, quantum theory describes small scales of length and mass. Emergence is central to most attempts to unify the two theories. Before considering specific examples, it is useful to make some distinctions.

First, a quantum theory of gravity is not necessarily the same as a theory to unify gravity with the three other forces described by the Standard Model. Whether the two problems are inextricable is unknown.

Second, there are two distinct possibilities for how classical gravity might emerge from a quantum theory. In Einstein’s theory of General Relativity, space-time and gravity are intertwined. Consequently, the two possibilities are as follows.

i. Space-time is not emergent. Classical General Relativity emerges from an underlying quantum field theory describing fields at small length scales, probably comparable to the Planck length.

ii. Space-time emerges from some underlying granular structure. In some limit, classical gravity emerges with the space-time continuum.

Third, there are "bottom-up" and "top-down" approaches to discovering how classical gravity emerges from an underlying quantum theory, as was emphasised by Bei Lok Hu.

Finally, there is the possibility that quantum theory itself is emergent, as discussed in an earlier post about the quantum measurement problem. Some proposals of Emergent Quantum Mechanics (EQM) attempt to include gravity.

I now mention several different approaches to quantum gravity and, for each, point out how it fits into the distinctions above.

Gravitons and semi-classical theory

A simple bottom-up approach is to start with classical General Relativity and consider gravitational waves as the normal modes of oscillation of the space-time continuum. They have a linear dispersion relation and move with the speed of light. They are analogous to sound waves in an elastic medium. Semi-classical quantisation of gravitational waves leads to gravitons, which are the quanta of a massless spin-2 field. They are the analogue of phonons in a crystal or photons in the electromagnetic vacuum. However, this reveals nothing about an underlying quantum theory, just as phonons with a linear dispersion relation reveal nothing about the underlying crystal structure.

On the other hand, one can start with a massless spin-2 quantum field and consider how it scatters off massive particles. In the 1960s, Weinberg showed that gauge invariance of the scattering amplitudes implied the equivalence principle (inertial and gravitational mass are identical) and the Einstein field equations. In a sense, this is a top-down approach, as it is a derivation of General Relativity from an underlying quantum theory. In passing, I mention that Weinberg used a similar approach to derive charge conservation and Maxwell’s equations of classical electromagnetism, and classical Yang-Mills theory for non-abelian gauge fields.

Weinberg pointed out that this could go against his reductionist claim that in the hierarchy of the sciences, the arrows of the explanation always point down, saying “sometimes it isn't so clear which way the arrows of explanation point… Which is more fundamental, general relativity or the existence of particles of mass zero and spin two?”

More recently, Weinberg discussed General Relativity as an effective field theory:

"... we should not despair of applying quantum field theory to gravitation just because there is no renormalizable theory of the metric tensor that is invariant under general coordinate transformations. It increasingly seems apparent that the Einstein–Hilbert Lagrangian √gR is just the least suppressed term in the Lagrangian of an effective field theory containing every possible generally covariant function of the metric and its derivatives..."

This is a bottom-up approach. Weinberg then went on to discuss a top-down approach:

"it is usually assumed that in the quantum theory of gravitation, when Λ reaches some very high energy, of the order of 10^15 to 10^18 GeV, the appropriate degrees of freedom are no longer the metric and the Standard Model fields, but something very different, perhaps strings... But maybe not..."

String theory

Versions of string theory from the 1980s aimed to unify all four forces. They were formulated in terms of nine spatial dimensions and a large internal symmetry group, such as SO(32), where supersymmetric strings were the fundamental units. In the low-energy limit, vibrations of the strings are identified with elementary particles in four-dimensional space-time. A particle with mass zero and spin two appears as an immediate consequence of the symmetries of the string theory. Hence, this was originally claimed to be a quantum theory of gravity. However, subsequent developments have found that there are many alternative string theories and it is not possible to formulate the theory in terms of a unique vacuum.

AdS-CFT correspondence

In the context of string theory, this correspondence conjectures a connection (a dual relation) between classical gravity in anti-de Sitter space-time (AdS) and quantum conformal field theories (CFTs), including some gauge theories. This connection could be interpreted in two different ways. One is that space-time emerges from the quantum theory. Alternatively, the quantum theory emerges from the classical gravity theory. This ambiguity of interpretation has been highlighted by Alyssa Ney, a philosopher of physics. In other words, it is ambiguous which of the two sides of the duality is the more fundamental. Witten has argued that AdS-CFT suggests that gauge symmetries are emergent. However, I cannot follow his argument.

Seiberg reviewed different approaches, within the string theory community, that lead to spacetime as emergent. An example of a toy model is a matrix model for quantum mechanics [which can be viewed as a zero-dimensional field theory]. Perturbation expansions can be viewed as discretised two-dimensional surfaces. In a large N limit, two-dimensional space and general covariance (the starting point for general relativity) both emerge. Thus, this shows how both two-dimensional gravity and spacetime can be emergent. However, this type of emergence is distinct from how low-energy theories emerge. Seiberg also notes that there are no examples of toy models where time (which is associated with locality and causality) is emergent.

Loop quantum gravity

This is a top-down approach, reviewed by Rovelli, in which both space-time and gravity emerge together from a granular structure, sometimes referred to as a "spin foam" or a "spin network". The starting point is Ashtekar’s demonstration that General Relativity can be described using the phase space of an SU(2) Yang-Mills theory. A boundary in four-dimensional space-time can be decomposed into cells and this can be used to define a dual graph (lattice) Γ. The gravitational field on this discretised boundary is represented by the Hilbert space of a lattice SU(2) Yang-Mills theory. The quantum numbers used to define a basis for this Hilbert space are the graph Γ, the "spin" [SU(2) quantum number] associated with the face of each cell, and the volumes of the cells. The Planck length limits the size of the cells. In the limit of the continuum and then of large spin, or vice versa, one obtains General Relativity.

Quantum thermodynamics of event horizons

A bottom-up approach was taken by Padmanabhan. He emphasises Boltzmann's insight: "matter can only store and transfer heat because of internal degrees of freedom". In other words, if something has a temperature and entropy then it must have a microstructure. He applies this insight to space-time by considering the connection between event horizons in General Relativity and the temperature of the thermal radiation associated with them. He frames his research as attempting to estimate Avogadro’s number for space-time.

The temperature and entropy associated with event horizons have been calculated for the following specific space-times:

a. For accelerating frames of reference (Rindler space-time) there is an event horizon which exhibits Unruh radiation with a temperature that was calculated by Fulling, Davies and Unruh.

b. The black hole horizon in the Schwarzschild metric has the temperature of Hawking radiation.

c. The cosmological horizon in de Sitter space is associated with a temperature proportional to the Hubble constant H, as discussed in detail by Gibbons and Hawking.
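To get a feel for the size of the three horizon temperatures listed above, here is a minimal Python sketch using the standard textbook expressions: T = ħa/(2πck) for Unruh radiation, T = ħc^3/(8πGMk) for Hawking radiation from a Schwarzschild black hole of mass M, and T = ħH/(2πk) for the de Sitter horizon. The function names and the example inputs (an acceleration of 1 m/s^2, one solar mass, and H = 70 km/s/Mpc) are my own illustrative assumptions and are not taken from the papers mentioned.

```python
import math

# Physical constants in SI units (CODATA values).
HBAR = 1.054571817e-34   # reduced Planck constant, J s
C = 299792458.0          # speed of light, m/s
G = 6.67430e-11          # Newton's constant, m^3 kg^-1 s^-2
K_B = 1.380649e-23       # Boltzmann constant, J/K


def unruh_temperature(acceleration):
    """Temperature (K) seen by an observer with proper acceleration in m/s^2."""
    return HBAR * acceleration / (2 * math.pi * C * K_B)


def hawking_temperature(mass):
    """Temperature (K) of a Schwarzschild black hole of the given mass in kg."""
    return HBAR * C**3 / (8 * math.pi * G * mass * K_B)


def de_sitter_temperature(hubble_rate):
    """Temperature (K) of the de Sitter horizon for a Hubble rate in 1/s."""
    return HBAR * hubble_rate / (2 * math.pi * K_B)


# Illustrative inputs (assumptions, chosen only to show orders of magnitude).
SOLAR_MASS = 1.98847e30      # kg
H0 = 70e3 / 3.0857e22        # 70 km/s/Mpc converted to 1/s

if __name__ == "__main__":
    print(f"Unruh, a = 1 m/s^2:      {unruh_temperature(1.0):.3e} K")
    print(f"Hawking, M = 1 M_sun:    {hawking_temperature(SOLAR_MASS):.3e} K")
    print(f"de Sitter, H = 70 units: {de_sitter_temperature(H0):.3e} K")
```

All three temperatures are tiny for everyday or astrophysical inputs, which is one reason why none of them has been measured directly.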

Padmanabhan considers the number of degrees of freedom on the boundary of the event horizon, Ns, and in the bulk, Nb. He argues for the holographic principle that Ns = Nb. On the boundary surface, there is one degree of freedom associated with every Planck area, Ns = A/Lp^2, where Lp is the Planck length and A is the surface area, which is related to the entropy of the horizon, as first discussed by Bekenstein and Hawking. In the bulk, classical equipartition of energy is assumed so the bulk energy E = Nb k T/2.
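The counting in the previous paragraph can be checked numerically for the de Sitter horizon. The sketch below is a rough illustration under several assumptions of mine that are not stated above: the horizon radius is c/H, the horizon temperature is T = ħH/(2πk), the energy density is fixed by the Friedmann equation H^2 = 8πGρ/3, and the bulk energy is taken to be E = |ρ + 3p| V with the de Sitter equation of state p = -ρ. The function names and the value H = 70 km/s/Mpc are also just illustrative choices.

```python
import math

# Physical constants in SI units (CODATA values).
HBAR = 1.054571817e-34   # reduced Planck constant, J s
C = 299792458.0          # speed of light, m/s
G = 6.67430e-11          # Newton's constant, m^3 kg^-1 s^-2
K_B = 1.380649e-23       # Boltzmann constant, J/K


def planck_length():
    """Planck length Lp = sqrt(hbar G / c^3) in metres."""
    return math.sqrt(HBAR * G / C**3)


def surface_dof(hubble_rate):
    """Ns = A / Lp^2 for a spherical horizon of radius c/H."""
    radius = C / hubble_rate
    area = 4 * math.pi * radius**2
    return area / planck_length()**2


def bulk_dof_de_sitter(hubble_rate):
    """Nb = E / (k T / 2) for de Sitter space, under the assumptions above."""
    mass_density = 3 * hubble_rate**2 / (8 * math.pi * G)   # Friedmann equation
    volume = (4 * math.pi / 3) * (C / hubble_rate)**3        # Hubble volume
    # Assumed bulk energy: |rho + 3p| V with p = -rho, i.e. 2 rho c^2 V.
    energy = 2 * mass_density * C**2 * volume
    temperature = HBAR * hubble_rate / (2 * math.pi * K_B)   # horizon temperature
    return energy / (0.5 * K_B * temperature)


H0 = 70e3 / 3.0857e22   # illustrative value: 70 km/s/Mpc converted to 1/s

if __name__ == "__main__":
    print(f"Planck length: {planck_length():.3e} m")
    print(f"Ns = {surface_dof(H0):.3e}")
    print(f"Nb = {bulk_dof_de_sitter(H0):.3e}")
```

Under these assumptions both expressions reduce to 4πc^5/(ħGH^2), so Ns = Nb holds for any value of H. For a Hubble rate close to the observed one, the count is of order 10^122.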

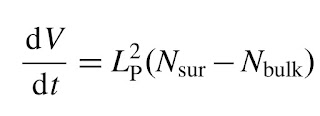

Padmanabhan gives an alternative perspective on cosmology through a novel derivation of the dynamic equations for the scale factor R(t) in the Friedmann-Robertson-Walker metric of the universe in General Relativity. His starting point is a simple argument leading to

dV/dt = Lp^2 (Ns - Nb)

V is the Hubble volume, 4π/(3H^3), where H is the Hubble constant, and Lp is the Planck length. The right-hand side is zero for the de Sitter universe, which is predicted to be the asymptotic state of our current universe.

He presents an argument that the cosmological constant is related to the Planck length, leading to the expression

where mu is of order unity and gives a value consistent with observation.
